One simple setting can change how your LLM responds…

Continue reading

For companies running IBM AIX who have yet to try running AIX in the cloud, I put together 3 “steps” that provide a path. This is based on my personal experience working on projects in this area.

Continue reading

Many of us face the crucial challenge of leveraging the power of AI while safeguarding sensitive or private data. The importance of this task cannot be overstated, as the consequences of data exposure can be severe. We’ve all been warned not to send such data to an external LLM, yet most businesses do not have the resources to host a local LLM solution. This is where the technique of ‘data masking’ comes into play, a solution we will explore in this article.
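As a rough illustration of the data masking idea, here is a minimal Python sketch. It swaps sensitive values for placeholders before text is sent to an external LLM, then restores them in the response. The two regular expressions, the placeholder format, and the function names `mask` and `unmask` are assumptions made for this example, not the method from the full article; real data needs far broader pattern coverage.

```python
import re

# Hypothetical patterns for illustration only; real deployments need many more.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask(text):
    """Replace sensitive values with placeholders.

    Returns the masked text and a mapping of placeholder -> original value.
    """
    mapping = {}
    for label, pattern in PATTERNS.items():
        def substitute(match, label=label):
            placeholder = f"<{label}_{len(mapping) + 1}>"
            mapping[placeholder] = match.group(0)
            return placeholder

        text = pattern.sub(substitute, text)
    return text, mapping


def unmask(text, mapping):
    """Restore the original values in text that contains placeholders."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text
```

Only the masked text would leave your environment; the mapping stays local so the LLM's answer can be unmasked afterward.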

Continue reading

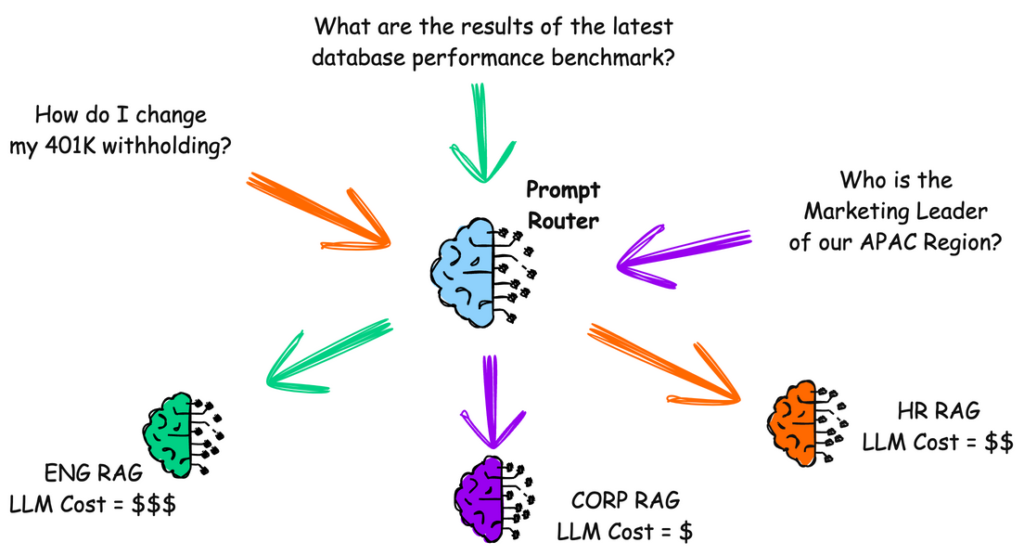

In your large company, every department wants its own LLM RAG.

Do you create a giant monolith system to handle everything?

A “Prompt Router” might help.
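To make the idea concrete, here is a minimal sketch of a keyword-based prompt router in Python. The department names, keyword sets, and the `route_prompt` function are hypothetical and exist only for illustration; a production router might instead use embeddings or a classifier to pick the target RAG system.

```python
import re

# Hypothetical departments and keywords, for illustration only.
ROUTES = {
    "hr": {"payroll", "benefits", "401k", "vacation"},
    "it": {"password", "laptop", "vpn", "network"},
    "legal": {"contract", "nda", "compliance"},
}


def route_prompt(prompt, default="general"):
    """Pick the department whose keywords overlap most with the prompt."""
    words = set(re.findall(r"[a-z0-9]+", prompt.lower()))
    scores = {dept: len(words & keywords) for dept, keywords in ROUTES.items()}
    best = max(scores, key=scores.get)
    # Fall back to a default route when no keyword matches at all.
    return best if scores[best] > 0 else default
```

Each department keeps its own smaller RAG system, and the router only decides where a prompt goes.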

Continue reading

How will the application of AI change IT operations? Let’s examine one example and make one prediction for the future.

Continue reading

In the world of LLMs, someone eventually pays for “tokens.” But tokens are not necessarily equivalent to words. Understanding the relationship between words and tokens is critical to grasping how language models like GPT-4 process text.

While a simple word like “cat” may be a single token, a more complex word like “unbelievable” might be broken down into multiple tokens such as “un,” “believ,” and “able.” By converting text into these smaller units, language models can better understand and generate natural language, making them more effective at tasks like translation, summarization, and conversation.
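The word-to-token split described above can be mimicked with a toy greedy longest-match tokenizer. This is only a sketch: the four-entry vocabulary is invented for the example, and real models such as GPT-4 use learned byte-pair-encoding vocabularies that may split these words differently.

```python
# Toy vocabulary, assumed for illustration; real vocabularies are learned
# from data and contain tens of thousands of entries.
VOCAB = {"cat", "un", "believ", "able"}


def tokenize(word, vocab=VOCAB):
    """Split a word into the longest vocabulary pieces, left to right."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest remaining substring first, then shorter ones.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # No vocabulary entry matches: fall back to a single character.
            tokens.append(word[i])
            i += 1
    return tokens
```

With this vocabulary, `tokenize("cat")` gives one token while `tokenize("unbelievable")` gives three, which is why token counts, and therefore costs, do not track word counts exactly.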

Continue reading

Regression testing checks that the answers a system returns still match the expected results. Whether it’s a ChatBot or a Copilot, regression testing is crucial for verifying the accuracy of responses. For instance, in a ChatBot designed for HR queries, the answer to a question like “How do I change my withholding percentage on my 401K?” must stay consistent, even after you change the LLM or the embedding process for the input documents.
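A minimal sketch of such a regression check in Python might look like the following. The `ask` callable stands in for whatever function queries your ChatBot, and the 0.8 threshold and character-level similarity from `difflib` are assumptions chosen for simplicity; a real harness might compare answers with embeddings or an LLM-based grader instead.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Return a 0..1 character-level similarity score between two strings."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def run_regression(ask, cases, threshold=0.8):
    """Ask each question and collect the ones whose answers drifted.

    ask:   callable taking a question string and returning an answer string
           (a stand-in for your ChatBot client).
    cases: list of (question, expected_answer) pairs.
    """
    failures = []
    for question, expected in cases:
        score = similarity(ask(question), expected)
        if score < threshold:
            failures.append((question, score))
    return failures
```

Running the same `cases` list before and after swapping the LLM or re-embedding the documents shows which answers changed enough to need review.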

As professionals working on AI projects, you might find this example of LLM Prompt Injection particularly relevant to your work. I’ve been involved in several AI projects, and I’d like to share one specific instance of LLM Prompt Injection that you can experiment with right away.

With the rapid deployment of AI features in the enterprise, it’s crucial to maintain the overall security of your creations. This example specifically addresses LLM Prompt Injection, one of the many aspects of LLM security.

Continue reading

Theme: Use your Power9 IBM Power resources for AI data processing tasks

By now, most have heard the saying:

“If you are not using AI, you are behind.”

For companies using IBM Power, especially Power9, which is commonplace throughout the industry, here is an idea of how you might use your existing or easily accessible cloud-based Power resources to help you jumpstart your journey to AI.

This article was authored by me and posted on my company’s website. Please read the full article there.

MGM Resorts reported an active Ransomware incident starting on September 11th, and as of September 17th, it had not fully recovered. Rumors are that the company did not pay the ransom and is “recovering” its systems.

It makes you wonder, if a company like MGM Resorts, with all of its available resources, is struggling with a ransomware attack, what does that mean for the everyday company, not on its scale? After all, cyber criminals attack companies of all sizes.

I previously wrote about the concept of using the cloud to test and perfect your malware defenses. The main point is that the cloud could be a safe way to test your preventative measures in a live sandbox environment without the risk of actual contamination.

Why didn’t MGM switch to its Disaster Recovery (DR) system? You would think it would have a mirror of its production systems and could “switch over” in such events. Most DR systems are designed to switch over in minutes or hours, not days. There are a few possibilities. One is that its DR system was also impacted by the attack. Another is that its DR model did not include shared components essential to its overall operation, although that seems unlikely.

Continue to the full article at this link.